When you have several systems on your network, especially virtualized ones in a homelab or at your organization, shared folders are extremely convenient and make your work easier and faster.

I, for example, have a folder that I share among different virtual machines. This way, whatever gets downloaded on one system is immediately available on the others.

The folder lives on a centralized, virtualized NFS file server. It is shared with a container that runs a torrenting app where I download files, and those files are available at the same time to another container that uses them for processing.

Basically, I centralize my files in one location and then share that location among different devices and instances, for convenience and functionality.

Plain NFS or a NAS file-sharing app?

In my case, I simply run a plain NFS share, because I don't have enough resources to run a NAS app, which would do the same job. This is why I set this up in the plainest, most basic way I could.

Setting up the sharing source

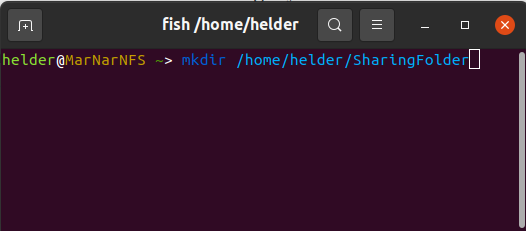

To do this, I first set up a simple Debian server, and set up a couple of folders in it that are intended to be shared among my internal devices.

Depending on how you plan to share these folders and on your particular environment (home, office, external world, etc.), you must consider how to set up permissions.

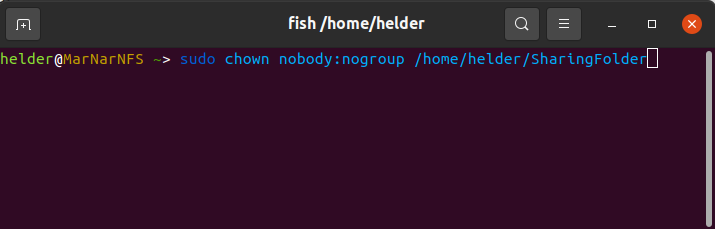

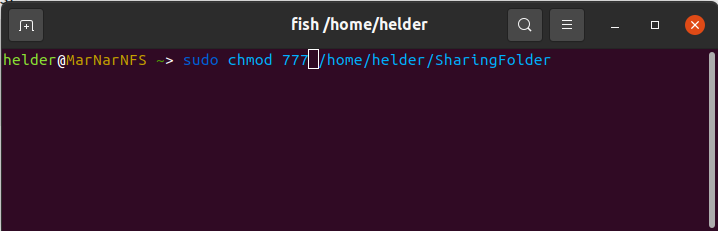

Assuming this is just for your internal network and you already have proper firewalls taking care of security, we will open this up completely:

sudo chown nobody:nogroup /home/helder/SharingFolder

sudo chmod 777 /home/helder/SharingFolder

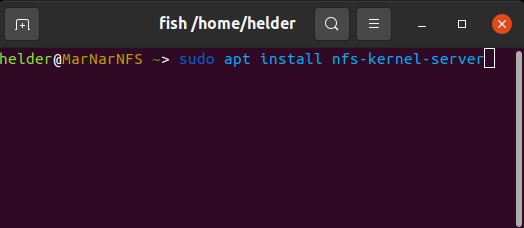

Once I had these folders, I had to install nfs-kernel-server so that my server could "understand" how to share things via NFS.

Depending on your distro you can set this up one way or another, but as I was using Debian I used:

sudo apt install nfs-kernel-server

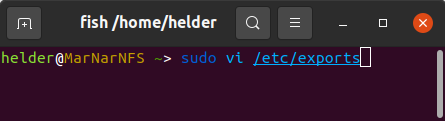

After this, I had to grant access to the folders I wanted to share and make that persistent. This is done through the exports file.

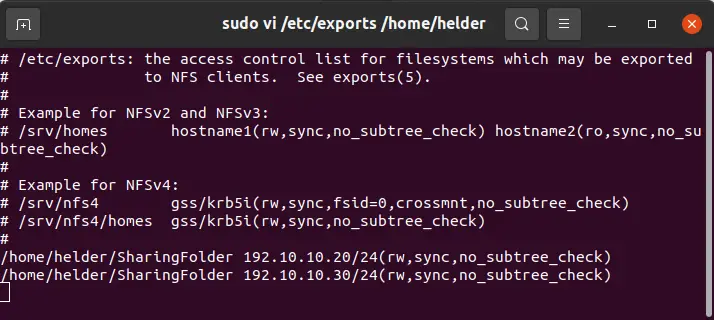

I also had to know which machines on my network I wanted to share this with. Two containers were going to consume this folder, and I had already identified their internal IPs: 192.10.10.20 and 192.10.10.30:

sudo vi /etc/exports

As you can see, I am giving read and write permissions to my containers as this was needed in my case. You might just give read-only permission instead, if it's something that should not make any changes to shared files.

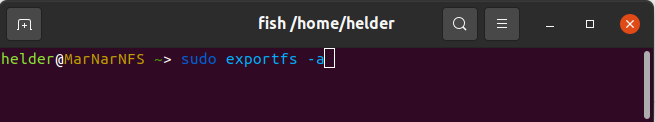

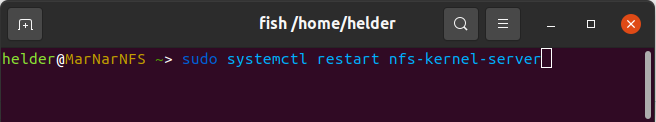
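For reference, an entry in /etc/exports is the shared path followed by one `client(options)` pair per allowed machine. The sketch below builds such a line for the two containers above. The helper name `export_line` is hypothetical, and the options `rw,sync,no_subtree_check` are an assumption (a common choice), not necessarily the exact ones shown in the screenshots.

```shell
# Build one /etc/exports entry: the shared path, then a client(options)
# pair for every client IP passed as an argument.
# Assumption: the option string is illustrative, adjust it to your needs.
export_line() {
    share_path=$1
    options=$2
    shift 2
    line=$share_path
    for client in "$@"; do
        line="$line $client($options)"
    done
    printf '%s\n' "$line"
}

# Print the entry for the shared folder and both containers.
export_line /home/helder/SharingFolder rw,sync,no_subtree_check 192.10.10.20 192.10.10.30
```

Piping the output through `sudo tee -a /etc/exports` would append it to the file. Replacing `rw` with `ro` gives the read-only variant.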

Lastly for this part of the process, you have to apply your changes. To do that:

sudo exportfs -a

sudo systemctl restart nfs-kernel-server

This will activate your exports and start sharing to the provided IPs.

Setting up the receiving clients

Now, it's time to set up the other end, the consumers of these shared folders.

To do this, you have to log into each of the receiving instances and follow the same steps. For this article, I will only show one of them (192.10.10.20), but you have to repeat this on every instance that needs access to the shared folders.

Again, as I did this on Ubuntu containers, commands might vary on some other distros, but in essence it's the same.

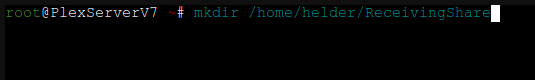

In concept, what needs to be done is to set up a mount point on each receiver. These mount points behave like regular folders on the receiver (in my case an Ubuntu container), but in reality they are linked to the actual folder on the sharing server.

We start by creating the folders on the receiver machine:

mkdir /home/helder/ReceivingShare

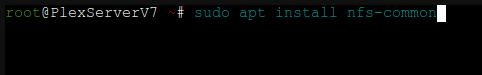

Now, we need to make sure NFS capabilities are installed on our receiving container, so we will do this:

sudo apt install nfs-common

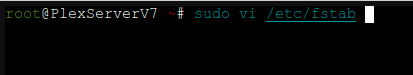

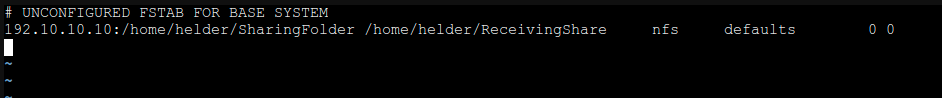

Now, I assume you want this to be a permanent share on your container, so it is accessible any time it is needed. Therefore, let's set this up as a persistent share in the container through the /etc/fstab file:

sudo vi /etc/fstab
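Each NFS line in /etc/fstab names the remote export as `server:/path`, followed by the local mount point, the filesystem type, the mount options, and the two trailing dump/fsck fields. A minimal sketch that prints such a line is below. The server address 192.10.10.10 is only a placeholder (use your own NFS server's IP), `fstab_line` is a hypothetical helper, and the options `defaults,_netdev` are an assumption.

```shell
# Print an /etc/fstab entry for an NFS share.
# Arguments: server address, exported path on the server, local mount point.
# Assumption: "defaults,_netdev" is an illustrative option set; _netdev
# marks the filesystem as one that needs the network to be up.
fstab_line() {
    server=$1
    remote_path=$2
    mount_point=$3
    printf '%s:%s %s nfs defaults,_netdev 0 0\n' "$server" "$remote_path" "$mount_point"
}

# 192.10.10.10 is a placeholder for the NFS server's address.
fstab_line 192.10.10.10 /home/helder/SharingFolder /home/helder/ReceivingShare
```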

After doing this, I suggest rebooting each receiver container or machine so the fstab entry is tested and confirmed. You should now have a common folder shared across your network with your configured devices.
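If a reboot is inconvenient, `sudo mount -a` also mounts everything listed in /etc/fstab. Either way, a quick check that the share is really mounted can be sketched as below. The helper `is_nfs_mounted` is hypothetical; it reads /proc/mounts (so it is Linux-specific) and looks for an NFS entry at the given mount point.

```shell
# Succeed (exit status 0) if an NFS filesystem is mounted at the given
# mount point, fail otherwise.
# Reads /proc/mounts by default; another file can be passed as the second
# argument, which is handy for trying the function out.
is_nfs_mounted() {
    mount_point=$1
    mounts_file=${2:-/proc/mounts}
    # Fields per line: device, mount point, filesystem type, options, ...
    awk -v mp="$mount_point" '
        $2 == mp && $3 ~ /^nfs/ { found = 1 }
        END { exit !found }
    ' "$mounts_file"
}

# Example usage on a receiver:
#   sudo mount -a
#   is_nfs_mounted /home/helder/ReceivingShare && echo "share is mounted"
```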